Data Engineering: Why Is It Dynamic in Every Field?

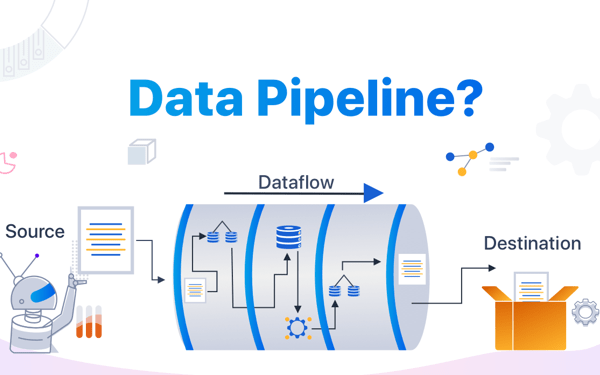

This article explains what data engineering is and why it plays a vital role in today's data-driven landscape. It outlines the key responsibilities of data engineers: data extraction, data preparation, pipeline design, and infrastructure management. It also describes how data engineers and data scientists depend on each other and clarifies how their roles differ. The article then examines why data engineering matters across many areas, including informed decision-making, business intelligence, automation, personalization, compliance, innovation, and predictive analytics. It concludes by underscoring the growing demand for data engineers in an increasingly digitized era.